Piercing the Black Box: ELI5

Introduction

One common criticism of machine learning and AI is that the reasoning behind a model's output can be obscure. This is known as the “black box” problem. The box is not sealed, however: AI and machine learning models can be examined with a variety of techniques that measure how much each input feature contributes to a model's output. Approaches such as SHapley Additive exPlanations (SHAP), Local Interpretable Model-Agnostic Explanations (LIME), and Explain Like I’m Five (ELI5) can be used to pierce the black box. In this blog post, we will discuss how ELI5 can be used to provide digestible insights into how AI models make their predictions.

ELI5: Explainable AI

ELI5 (Explain Like I'm 5) is a Python library designed to provide clear, easy-to-understand explanations of machine learning models and their predictions. It offers tools for inspecting and visualizing what a model has learned, making it easier for non-technical stakeholders to grasp the decisions made by complex algorithms. ELI5 is particularly useful for explaining models across different types of machine learning tasks, including text classification, traditional classification, named entity recognition, and image classification.

Text Classification

For text classification tasks, ELI5 can demystify the decision-making process of a model by showing which words or phrases in the text influence the prediction. This is especially useful when the model's inputs are hard to read directly, as with TF-IDF vectors or word embeddings. ELI5 generates visualizations that highlight the most important terms, making it easier to understand why a model has classified a piece of text in a particular way.

In this example, a model assigns topics to a piece of text. ELI5 displays the probability of each topic and highlights the words that contribute most to the model's decision. Words like "medication" and "kidney" push the prediction towards a “medical” topic, while words like "broken" and "bout" push against it. This visualization helps stakeholders understand which aspects of the text are driving the model's predictions.
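To make the idea of a word "pushing" a prediction concrete, here is a minimal sketch of how per-word contributions fall out of a linear bag-of-words model: each word contributes its weight times its count, and the contributions plus a bias add up to the class score. This is an illustration of the arithmetic, not ELI5's API, and the weights, bias, and sentence are invented for the example.

```python
from collections import Counter

# Hypothetical weights for the "medical" class of a linear bag-of-words model.
# These numbers are invented for illustration, not taken from a trained model.
MEDICAL_WEIGHTS = {"medication": 1.8, "kidney": 1.5, "broken": -0.6, "bout": -0.4}
BIAS = -0.5


def word_contributions(text, weights, bias):
    """Return (score, contributions), where each contribution is weight * count."""
    counts = Counter(text.lower().split())
    contributions = {
        word: weights[word] * n for word, n in counts.items() if word in weights
    }
    score = bias + sum(contributions.values())
    return score, contributions


score, contributions = word_contributions(
    "The medication helped after a bout of kidney pain", MEDICAL_WEIGHTS, BIAS
)

# List words from most supportive to most opposing for the "medical" class.
for word, value in sorted(contributions.items(), key=lambda kv: -kv[1]):
    print(f"{word:12s} {value:+.2f}")
print(f"{'<BIAS>':12s} {BIAS:+.2f}")
print(f"score = {score:+.2f}")
```

Positive contributions correspond to the words a visualization would highlight in favor of the topic, and negative ones to the words highlighted against it.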

Traditional Classification

ELI5 is also adept at explaining traditional classification tasks that involve tabular data. It can reveal which features of the dataset are most influential in the model's decision-making process. This is done through feature importance visualizations, where each feature's contribution to the prediction is clearly displayed.

In this case, the model predicts whether a passenger survived the sinking of the Titanic based on certain attributes of that person. ELI5 shows the classification probabilities and highlights features such as "Sex" and "Fare" that heavily influence the prediction. This helps data scientists and researchers understand which factors in the Titanic dataset the model relies on.
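One common way to measure feature importance on tabular data is permutation importance: shuffle one column, re-score the model, and see how much accuracy drops. The sketch below shows the idea with the standard library only. The rows are invented (not real passenger records), `toy_model` is a hypothetical stand-in for a trained classifier, and the "Age" column is added purely to show what an unused feature looks like.

```python
import random

# Invented rows in the spirit of the Titanic data; not real passenger records.
ROWS = [
    {"Sex": "female", "Fare": 80.0, "Age": 29},
    {"Sex": "female", "Fare": 12.0, "Age": 41},
    {"Sex": "male", "Fare": 95.0, "Age": 35},
    {"Sex": "male", "Fare": 8.0, "Age": 22},
    {"Sex": "female", "Fare": 30.0, "Age": 54},
    {"Sex": "male", "Fare": 7.5, "Age": 19},
    {"Sex": "male", "Fare": 60.0, "Age": 47},
    {"Sex": "male", "Fare": 10.0, "Age": 63},
]


def toy_model(row):
    """Hypothetical stand-in for a trained classifier; it ignores Age."""
    return int(row["Sex"] == "female" or row["Fare"] > 50)


# Labels come from the toy model itself, so baseline accuracy is 1.0.
LABELS = [toy_model(row) for row in ROWS]


def accuracy(rows):
    """Fraction of rows where the toy model's prediction matches the label."""
    return sum(toy_model(r) == y for r, y in zip(rows, LABELS)) / len(rows)


def permutation_importance(feature, repeats=20, seed=0):
    """Mean drop in accuracy after shuffling one feature column."""
    rng = random.Random(seed)
    baseline = accuracy(ROWS)
    drops = []
    for _ in range(repeats):
        column = [row[feature] for row in ROWS]
        rng.shuffle(column)
        shuffled = [dict(row, **{feature: v}) for row, v in zip(ROWS, column)]
        drops.append(baseline - accuracy(shuffled))
    return sum(drops) / repeats


importances = {name: permutation_importance(name) for name in ("Sex", "Fare", "Age")}
for name, value in sorted(importances.items(), key=lambda kv: -kv[1]):
    print(f"{name:5s} {value:.3f}")
```

Because the toy model never reads "Age", shuffling that column cannot change any prediction, so its importance comes out as exactly zero. With a real model, the same procedure would typically be run on held-out data.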

Named Entity Recognition (NER)

Named Entity Recognition (NER) models identify and classify entities in text, such as names, dates, and locations. ELI5 can explain the inner workings of NER models by showing which parts of the text contribute to the identification of each entity. This is particularly useful for verifying that the model is correctly identifying entities and not being misled by irrelevant information.

In this example, ELI5 shows how strongly each entity label (LOC, ORG, PER, etc.) tends to be followed by each other label. We can see, for instance, that the “B-LOC” label, which marks the beginning of a location, is usually followed by “I-LOC”, which marks its continuation. This visualization helps ensure that the model's entity recognition aligns with human expectations.
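A label-to-label view like this can be read as a table of transition weights, where a large positive weight means the model favors that pair of consecutive labels and a large negative weight means it discourages it. The sketch below ranks such a table; the weights are invented for illustration and do not come from a trained model, and `most_likely_next` is a hypothetical helper.

```python
# Hypothetical transition weights between BIO entity labels, as a sequence
# model such as a linear-chain CRF might learn them. Values are invented.
TRANSITIONS = {
    ("B-LOC", "I-LOC"): 4.1,
    ("B-LOC", "O"): 0.3,
    ("B-PER", "I-PER"): 3.8,
    ("B-PER", "I-LOC"): -3.5,
    ("O", "B-PER"): 0.9,
    ("O", "I-LOC"): -5.2,
    ("O", "O"): 1.2,
}


def most_likely_next(label, transitions):
    """Return the label with the highest transition weight out of `label`."""
    candidates = {dst: w for (src, dst), w in transitions.items() if src == label}
    return max(candidates, key=candidates.get)


# Rank all transitions from most encouraged to most discouraged.
ranked = sorted(TRANSITIONS.items(), key=lambda kv: kv[1], reverse=True)
print("Most encouraged:", ranked[0])
print("Most discouraged:", ranked[-1])
print("After B-LOC the model favors:", most_likely_next("B-LOC", TRANSITIONS))
```

A strongly negative weight for a pair such as "O" followed by "I-LOC" is what we would hope to see, since a continuation tag should not appear without a beginning tag before it.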

Image Classification

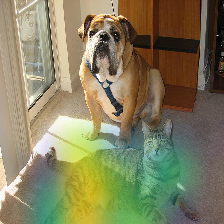

For image classification tasks, ELI5 provides explanations by identifying which parts of an image are most important for the model's classification. This is achieved by highlighting image segments that significantly influence the model's predictions, making it easier to understand how the model interprets visual data.

In this case, a model classifies images of animals as either a cat or a dog. ELI5 highlights the areas of the image, such as the animal's face and body, that matter most for the classification. This helps stakeholders understand which features of the image the model relies on, ensuring transparency in the decision-making process.
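To give a feel for how a heatmap over an image can be produced, here is a minimal sketch of occlusion sensitivity: blank out one region at a time and record how much the model's score drops. This is a simpler technique than the gradient-based Grad-CAM method that ELI5's Keras support builds on, so treat it as an illustration of the general idea rather than a description of the library. The tiny image and `toy_score` classifier are invented for the example.

```python
# A 4x4 grayscale "image"; the bright 2x2 block stands in for the animal.
IMAGE = [
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.9, 0.8, 0.0],
    [0.0, 0.7, 0.9, 0.0],
    [0.0, 0.0, 0.0, 0.0],
]


def toy_score(image):
    """Hypothetical classifier score: mean brightness of the central 2x2 area."""
    center = [image[r][c] for r in (1, 2) for c in (1, 2)]
    return sum(center) / len(center)


def occlusion_map(image, score_fn):
    """Score drop when each pixel is blanked out; larger means more important."""
    baseline = score_fn(image)
    heatmap = []
    for r, row in enumerate(image):
        heat_row = []
        for c in range(len(row)):
            occluded = [list(line) for line in image]  # copy before editing
            occluded[r][c] = 0.0
            heat_row.append(baseline - score_fn(occluded))
        heatmap.append(heat_row)
    return heatmap


heatmap = occlusion_map(IMAGE, toy_score)
for heat_row in heatmap:
    print(" ".join(f"{value:.3f}" for value in heat_row))
```

Pixels in the background leave the score unchanged and get a value of zero, while pixels inside the bright block get positive values; on a real image, those positive regions are what a heatmap overlay would highlight.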

Conclusion

ELI5 is a powerful tool for interpreting and explaining machine learning models across various use cases. For text classification, traditional classification, named entity recognition, and image classification alike, ELI5 provides clear and intuitive visualizations that make complex models more accessible. At Delphi Intelligence, we use ELI5 and other interpretability tools to ensure our models are not only accurate but also transparent and understandable to all stakeholders. By leveraging ELI5, we can enhance trust and confidence in our machine learning solutions, bridging the gap between technical and non-technical audiences.