Piercing the Black Box: LIME

Introduction

One common criticism of machine learning and AI is that the reasoning behind its output can be obscure. This is what's known as the “black box” of AI. However, despite this common misconception, AI & machine learning models can be understood through a variety of techniques that examine the importance of the features that go into a model. Methodologies such as SHapley Additive exPlanations (SHAP), Local Interpretable Model-Agnostic Explanations (LIME), and Explain Like I’m Five (ELI5) can be used to pierce the black box. In this blog post, we will discuss how LIME can be used to provide digestible insights into individual classifications.

LIME: How it Works

Local Interpretable Model-agnostic Explanations (LIME) works in a model-agnostic fashion. This means that it can interpret a model’s result irrespective of the algorithm or underlying feature engineering steps taken to produce the classification. It does this by slightly tweaking the human-understandable inputs used by the model and seeing how that changes the model’s classification. In perturbing various parts of the inputs and looking at how the model’s classification changes, LIME can produce a simpler more explainable model for interpreting one specific classification.

Text-based Models

For text classification, LIME works even when the model uses complex features such as word vector embeddings. It’s able to do this by changing the words and gauging how the model’s classification changes.

Let’s look at an example of how LIME can be used to explain text classifications:

This example shows a model that classifies text as being more sincere or insincere. It shows the model’s prediction, (25% probability for sincere, 75% probability for insincere), as well as which words in the text are influencing the model towards one classification or the other. It also highlights the original text sections to showcase how LIME is interpreting the model’s decisions.

Tabular-based Models

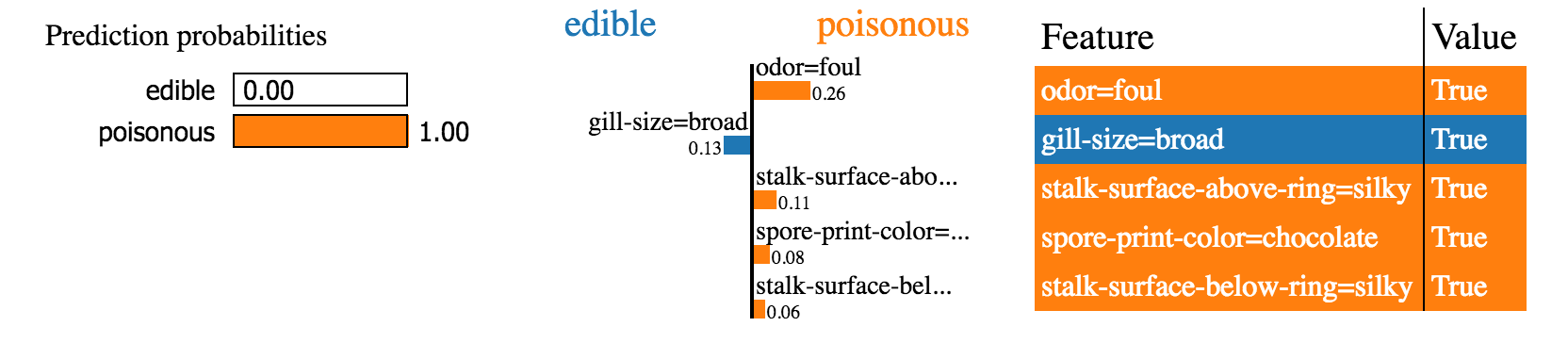

The following example shows LIME at work with a model that uses tabular data in a more traditional machine learning use case.

In this case, the model is tasked with predicting whether the given flora is edible or poisonous based on some characteristics of the specimen. Like with the previous textual example, LIME displays the model’s classification probabilities on the left, while also showcasing which features are contributing towards a particular classification on the right.

Image-based Models

For image classification tasks, LIME tweaks segments of the image to gauge which parts of the image are critical for the model to make its classification. This enables LIME to highlight which sections of an image contribute the most towards classifications.

Conclusion

While SHAP provides global interpretations of a model's behavior across an entire dataset, LIME focuses on explaining individual predictions. SHAP offers broad model insights, whereas LIME examines one classification at a time. By utilizing LIME and other feature analysis and model explanation techniques, we can gain a broader understanding of why the models make their predictions and thus gain more confidence in them. At Delphi Intelligence, we pride ourselves in not just creating efficient and precise models, but also in providing transparency and explainability to them.

Sources: